Geometric Constants¶

This is about several constants related to the geometry of the network.

n_input¶

Each of the at maximum n_steps vectors is a vector of MFCC features of a

time-slice of the speech sample. We will make the number of MFCC features

dependent upon the sample rate of the data set. Generically, if the sample rate

is 8kHz we use 13 features. If the sample rate is 16kHz we use 26 features…

We capture the dimension of these vectors, equivalently the number of MFCC

features, in the variable n_input. By default n_input is 26.

n_context¶

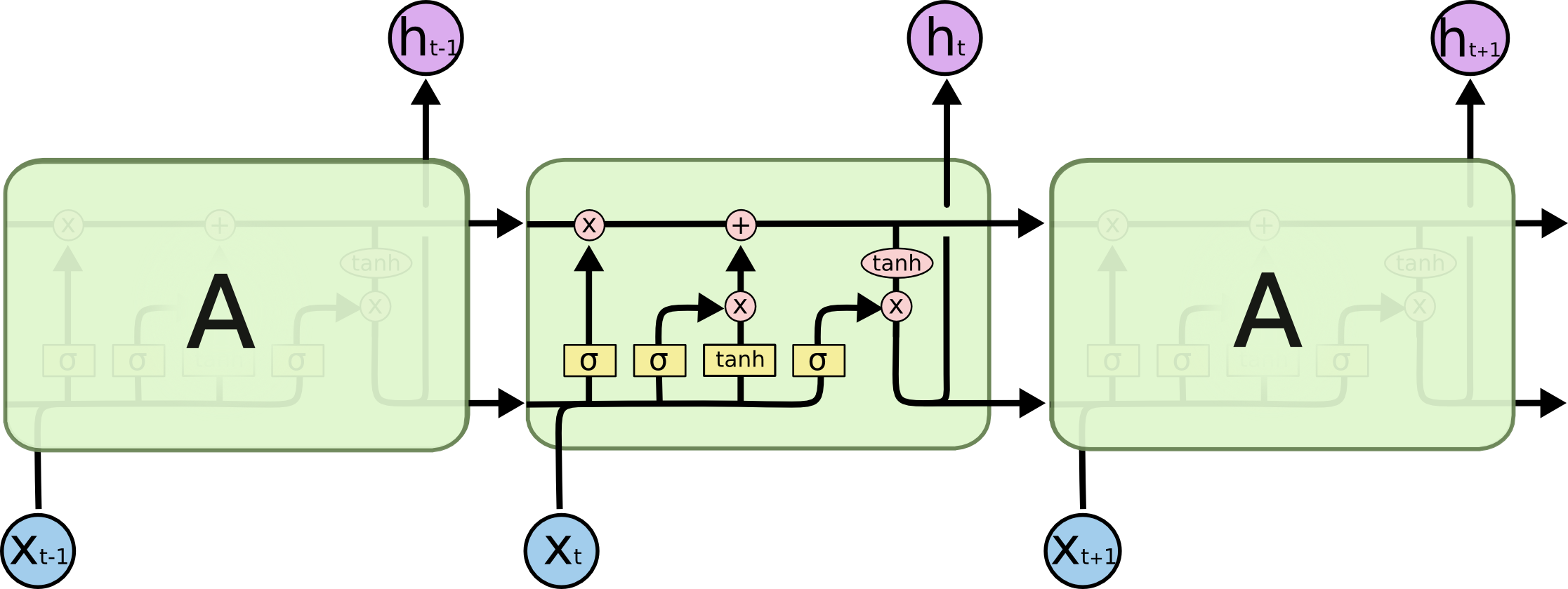

As previously mentioned, the RNN is not simply fed the MFCC features of a given

time-slice. It is fed, in addition, a context of \(C\) frames on

either side of the frame in question. The number of frames in this context is

captured in the variable n_context. By default n_context is 9.

Next we will introduce constants that specify the geometry of some of the non-recurrent layers of the network. We do this by simply specifying the number of units in each of the layers.

n_cell_dim¶

Hence, we are free to choose the dimension of this cell state independent of the

input dimension. We capture the cell state dimension in the variable n_cell_dim.